Filling In a JSON Template With an LLM

With OpenAI, your best bet is to give a few examples as part of the prompt. You can specify different data types such as strings, numbers, arrays, and objects, as well as constraints or presence validation. You want to deploy an LLM application in production to extract structured information from unstructured data in JSON format. However, the process of incorporating variable... I would pick some rare... It can also create intricate schemas, working faster and more accurately than standard generation. Any suggested tool for manually reviewing or correcting JSON data for training?
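The few-shot approach mentioned above can be sketched as a small prompt builder. This is a minimal illustration, not a definitive recipe: the field names (`name`, `age`, `city`), the example texts, and the `build_messages` helper are all hypothetical, and the sketch only assembles a chat-style message list without calling any API.

```python
import json

# Hypothetical few-shot examples: texts and field names are illustrative only.
FEW_SHOT_EXAMPLES = [
    {
        "text": "Alice Smith, 34, lives in Paris.",
        "json": {"name": "Alice Smith", "age": 34, "city": "Paris"},
    },
    {
        "text": "Bob Lee is a 52-year-old from Toronto.",
        "json": {"name": "Bob Lee", "age": 52, "city": "Toronto"},
    },
]


def build_messages(user_text):
    """Build a chat-style message list with input/output pairs as examples."""
    messages = [
        {
            "role": "system",
            "content": (
                "Extract name, age and city. "
                "Reply with a single JSON object and nothing else."
            ),
        }
    ]
    for example in FEW_SHOT_EXAMPLES:
        # Each example is shown as a user turn followed by the ideal reply.
        messages.append({"role": "user", "content": example["text"]})
        messages.append(
            {"role": "assistant", "content": json.dumps(example["json"])}
        )
    # The real input goes last, so the model continues the pattern.
    messages.append({"role": "user", "content": user_text})
    return messages


messages = build_messages("Carla Gomez, 27, moved to Lisbon last year.")
```

The resulting list can be handed to whichever chat client you use. Examples make the target shape much more likely, but they do not guarantee it, so the reply still needs to be parsed and checked.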

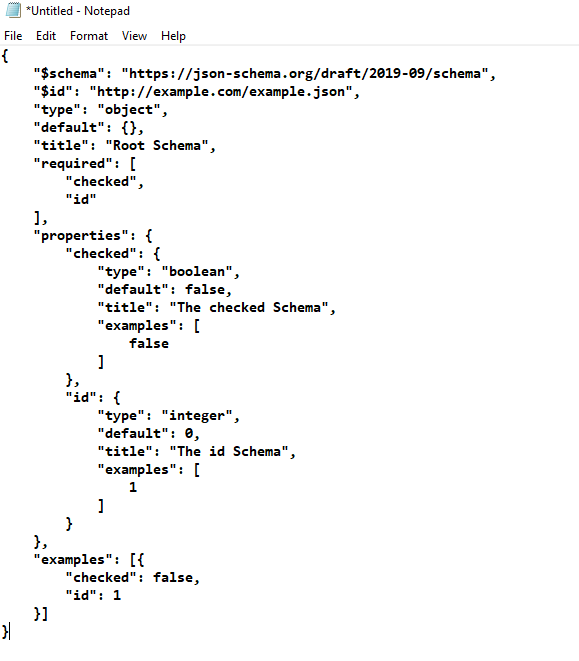

Let’s take a look through an example main.py. Not only does this guarantee that your output is JSON, it also lowers your generation cost and latency by filling in many of the repetitive schema tokens without passing them through the model. llm_template enables the generation of robust JSON outputs from any instruction model.
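To make the idea of filling in the schema tokens from code more concrete, here is a minimal sketch. It is not the API of any library named in this article: `fake_model` is a hypothetical stand-in for a real LLM call, and `fill_template` and the two-field schema are invented for illustration. The point is that keys, quotes, and braces are written by the program, and the model is only asked for the values.

```python
import json


def fake_model(prompt):
    """Hypothetical stand-in for an LLM call; returns a canned value."""
    canned = {"name": "Alice", "age": "34"}
    # The field being asked for is the text after the last "Field: " marker.
    field = prompt.rsplit("Field: ", 1)[-1].strip()
    return canned.get(field, "")


def fill_template(schema, source_text, generate=fake_model):
    """Write keys and punctuation from code; ask the model only for values."""
    result = {}
    for field, field_type in schema.items():
        raw = generate(f"Text: {source_text}\nField: {field}")
        # Convert the raw string to the type the schema asks for.
        result[field] = int(raw) if field_type == "number" else raw
    # json.dumps emits the structural tokens, so the syntax is always valid.
    return json.dumps(result)


output = fill_template({"name": "string", "age": "number"}, "Alice is 34.")
```

Because the structure never passes through the model, the output always parses. The values themselves can still be wrong, and a real implementation would need to handle a value that fails type conversion.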

Vertex AI now has two new features, response_mime_type and response_schema, that help restrict LLM outputs to a certain format. You want the generated information to be... With your own local model, you can modify the code to force certain tokens to be output. This allows the model to... Is there any way I can force the LLM to generate JSON with correct syntax and fields?
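As a rough illustration of the two Vertex AI settings named above, the sketch below only builds a plain dictionary holding them. The schema fields are hypothetical, and both the schema dialect Vertex AI accepts and the way this configuration is passed to the SDK are assumptions to verify against the Vertex AI documentation.

```python
import json

# Hypothetical schema: field names are illustrative, and the accepted schema
# dialect is an assumption to check against the Vertex AI documentation.
response_schema = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "age": {"type": "integer"},
    },
    "required": ["name", "age"],
}

# The two settings named in the text, gathered in one place.
generation_config = {
    "response_mime_type": "application/json",
    "response_schema": response_schema,
}

print(json.dumps(generation_config, indent=2))
```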

Super JSON Mode is a Python framework that enables the efficient creation of structured output from an LLM by breaking up a target schema into atomic components and then performing... In this article, we are going to talk about three tools that can, at least in theory, force any local LLM to produce structured JSON output: LM Format Enforcer, Outlines, and... Learn how to implement this in practice.

It Can Also Create Intricate Schemas, Working Faster And More Accurately Than Standard Generation

Defines a JSON schema using Zod. Understand how to make sure LLM outputs are valid JSON, and valid against a specific JSON schema.
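Checking that a model reply is valid JSON and matches an expected shape can be sketched with the Python standard library alone. This is a deliberately simplified stand-in for a real JSON Schema validator: the `SCHEMA` format (field name mapped to a type and a required flag) and the `validate_output` helper are hypothetical.

```python
import json

# Hypothetical, simplified schema: field name -> (expected type, required).
SCHEMA = {
    "name": (str, True),
    "age": (int, True),
    "tags": (list, False),
}


def validate_output(raw, schema=SCHEMA):
    """Return a list of problems; an empty list means the output is valid."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    problems = []
    for field, (expected_type, required) in schema.items():
        if field not in data:
            if required:
                problems.append(f"missing required field: {field}")
            continue
        value = data[field]
        wrong_type = not isinstance(value, expected_type)
        # bool is a subclass of int in Python, so reject it for int fields.
        if expected_type is int and isinstance(value, bool):
            wrong_type = True
        if wrong_type:
            problems.append(f"wrong type for field: {field}")
    return problems


print(validate_output('{"name": "Alice", "age": 34}'))
print(validate_output('{"name": "Alice"}'))
```

A check like this is useful as a final gate even when the output is constrained upstream, because the problem list can be fed back into a retry prompt.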

This Allows The Model To.

For example, if I want the JSON object to have a... By facilitating easy customization and iteration on LLM applications, DeepEval enhances the reliability and effectiveness of AI models in various contexts.


With Your Own Local Model, You Can Modify The Code To Force Certain Tokens To Be Output.

In this blog post, I will guide you through the process of ensuring that you receive only JSON responses from any LLM (large language model).
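Forcing certain tokens with a local model usually comes down to masking the scores of disallowed tokens before the next token is chosen. The toy sketch below shows that single step with made-up scores and a hypothetical `constrained_pick` helper. It is not taken from any specific library; a real implementation would apply the mask to the model's logits at every decoding step.

```python
import math


def constrained_pick(scores, allowed):
    """Pick the best-scoring allowed token; all others are masked to -inf."""
    masked = {
        token: (score if token in allowed else -math.inf)
        for token, score in scores.items()
    }
    return max(masked, key=masked.get)


# Made-up scores a model might assign to the first output token.
scores = {"Sure": 2.5, "{": 1.0, "Here": 2.0, "[": 0.3}

# Without constraints the model would start with chatty text.
unconstrained = max(scores, key=scores.get)

# A JSON object must start with "{", so only that token is allowed here.
constrained = constrained_pick(scores, allowed={"{"})

print(unconstrained, constrained)
```

The set of allowed tokens changes at each step depending on where you are in the JSON structure, and tracking that state is the part the dedicated tools take care of.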
